In case you haven’t heard of Serverless yet, listen up. It might change the way you design and manage your applications – just like it did for us at Purple Technology back in 2017 when we adopted the technology. Now, three years later, 90% of our systems are powered by Serverless ⚡️.

Let me describe Serverless as offered by the cloud provider, Amazon Web Services. We decided to go with this one since it is the biggest player on the market.

What is Serverless?

The rest of the team and I put a lot of thought into how to define Serverless, and we came up with this definition:

Serverless is an expansive ecosystem of managed services with already defined design patterns. Once these components are connected, it allows the entire system to scale to zero and back within hundreds of milliseconds, depending on the traffic requirements.

However, this definition is not exactly helpful for a newbie, so stay tuned for loads of practical examples and demonstrations covered in the upcoming series of posts. For now, let’s just say that Serverless is an app which only runs when users are actually using it.

What can you create in Serverless

- REST API (Amazon API Gateway)

- GraphQL API (AWS AppSync)

- Cron - code that runs on schedules (Amazon CloudWatch Events)

- Static-hosted web page with CDN (Amazon S3 + Amazon CloudFront)

- NoSQL database (Amazon DynamoDB)

- Queue (Amazon Simple Queue Service) and much more…

Serverless revolution

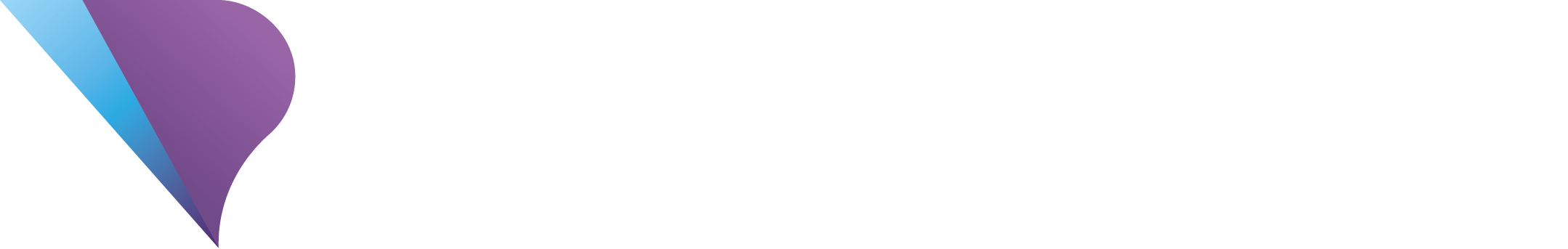

I dare to say that the birth of Serverless is just as groundbreaking as Cloud was in 2008. Even though the basic element which allowed the creation of Serverless was an advancement in container technologies, it’s not just about the technological advancement: it’s a complete transformation of the design and application management paradigm.

Visualization of technological evolution:

The birth of Serverless

This entire (r)evolution began in the fall of 2014 when AWS (Amazon Web Services) announced a new service called “AWS Lambda.” This service allowed developers to run the source code – the lambda function – without the need to manage any kind of physical or virtual infrastructure. This service itself wouldn’t have been as useful if AWS hadn’t also announced its own basic “event sources” alongside it – things like Amazon S3 bucket notifications, Amazon Kinesis stream records, and Amazon DynamoDB update streams. That was the beginning of a new chapter of cloud services called Serverless.

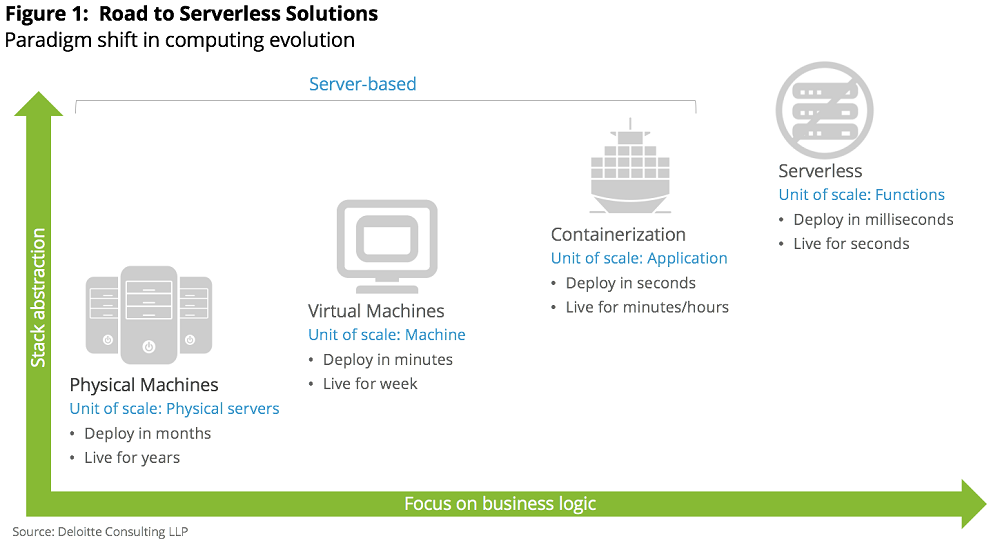

Serverless is not just Lambda

At first glance, it may seem that Serverless and Lambda are two interchangeable terms, which is not true. Lambda is just a piece of a large Serverless puzzle. For a Lambda function to be useful in practice, it always needs another piece of the puzzle – e.g. API Gateway, which takes care of any REST API endpoints that need to be connected to the Lambda functions.
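To make the Lambda + API Gateway pairing more concrete, here is a minimal sketch of a function that could sit behind a REST endpoint. It assumes the Lambda proxy integration, where API Gateway passes query parameters under `queryStringParameters` and expects a dictionary with `statusCode`, `headers` and a string `body` in return. The `name` parameter and the greeting are made up for illustration.

```python
import json


def handler(event, context):
    """Entry point that API Gateway (Lambda proxy integration) would call."""
    # With proxy integration, query parameters arrive under this key;
    # it is None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")  # "name" is a hypothetical parameter

    # API Gateway expects a status code, headers and a string body.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }
```

Because the handler is a plain function, you can call it locally with a hand-written event before deploying anything.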

Event sources

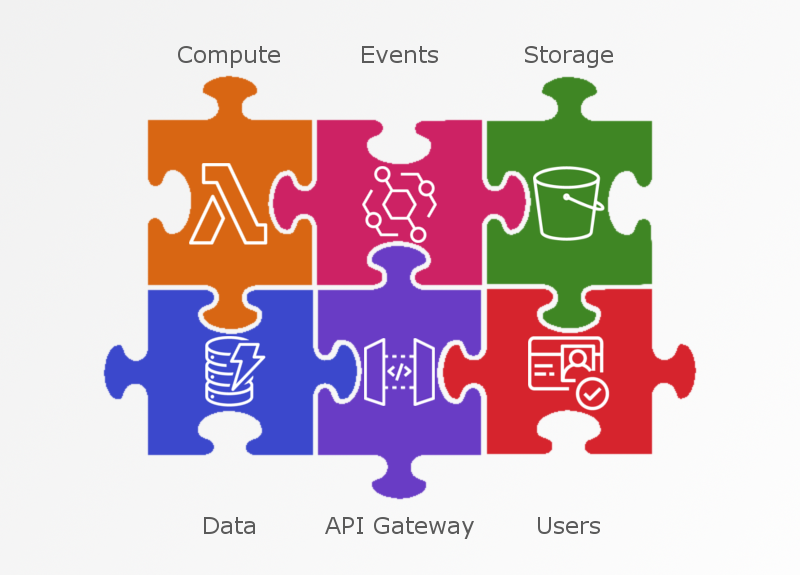

I mentioned the term “event source” in the paragraph about The birth of Serverless. Event source is one of the cornerstones of Serverless architecture. A Lambda function is always triggered as a reaction to an event – e.g. an upload of a picture to Amazon S3 file storage. The function then runs its code as a reaction, e.g. it creates a thumbnail of the uploaded picture.
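The thumbnail example could look roughly like the sketch below. It only reads the bucket name and object key out of the S3 notification and works out where a thumbnail would be stored; the actual image resizing is left out. The `thumbnails/` prefix and the `thumbnail_key_for` helper are assumptions for illustration, not part of any AWS API.

```python
from urllib.parse import unquote_plus


def thumbnail_key_for(key):
    """Hypothetical naming scheme: keep thumbnails under a 'thumbnails/' prefix."""
    return f"thumbnails/{key}"


def handler(event, context):
    """Reacts to S3 'object created' notifications."""
    planned = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 notifications.
        key = unquote_plus(record["s3"]["object"]["key"])
        # A real function would download the image, resize it and upload
        # the result here; this sketch only records what it would do.
        planned.append(
            {"bucket": bucket, "source": key, "target": thumbnail_key_for(key)}
        )
    return planned
```

The function never polls S3. It runs only when S3 delivers an event, which is exactly what makes S3 an event source.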

There are two different ways to run lambda functions:

- synchronous – the calling service triggers a Lambda function, waits for its result, and then decides how to proceed based on that result.

E.g. a REST API call: request -> response

- asynchronous – the calling service simply triggers a Lambda function and does not wait for it to finish.

E.g. creating a thumbnail for a picture newly uploaded to S3
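If you call a function yourself through the AWS SDK, the difference between the two modes comes down to a single parameter. The sketch below uses `boto3`, where `InvocationType` is `RequestResponse` for a synchronous call and `Event` for an asynchronous one. The helper names and the `create-thumbnail` function name in the usage note are hypothetical, and the `invoke` wrapper needs `boto3` installed and AWS credentials configured.

```python
import json


def build_invoke_args(function_name, payload, wait_for_result):
    """Builds keyword arguments for the Lambda Invoke API."""
    return {
        "FunctionName": function_name,
        # "RequestResponse" = synchronous, "Event" = asynchronous.
        "InvocationType": "RequestResponse" if wait_for_result else "Event",
        "Payload": json.dumps(payload).encode("utf-8"),
    }


def invoke(function_name, payload, wait_for_result=True):
    """Calls the function through boto3 (requires AWS credentials)."""
    import boto3  # imported lazily so the helper above works without it

    client = boto3.client("lambda")
    args = build_invoke_args(function_name, payload, wait_for_result)
    return client.invoke(**args)
```

For example, `invoke("create-thumbnail", {"key": "cat.png"}, wait_for_result=False)` would hand the event over to Lambda and return right away, without waiting for the thumbnail to be created.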

Managed services

One of the most prominent ideas in Serverless is to maximize the use of managed services and minimize the amount of your own code in production, which helps you avoid technical debt and allows you to invest money into product development instead.

Managed services are services where the development, management and responsibility are outsourced to a third party. Take Amazon Relational Database Service as an example. This is a service which provides relational databases such as MySQL, PostgreSQL, MariaDB, Oracle and others which are fully managed by Amazon. This means you don’t have to invest a fortune into updates, backups, secure configuration, disaster recovery, read replicas, scaling and so on, and instead, you can spend the money on further development of the product.

Thanks to managed services, even relatively small teams can build and manage large projects. When you consider the alarming lack of IT specialists on the job market, this is a huge competitive advantage.

Running costs and scaling

Cloud popularized the pay-as-you-go model of pricing. The same applies to Serverless, but it takes it a step further.

In the AWS Lambda Serverless ecosystem, you pay for each 100ms block in which your code is actually running. To give you an idea, one 100ms block of the cheapest configuration, with a memory of 128 MB, costs $0.0000002083.

Example: let’s say that we have an API Gateway endpoint connected to a lambda function with a memory of 128 MB, and the function takes 500ms to process the request and create a response. How many requests can we send to this API so that the final running cost is $1?

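A 500ms request is billed as five 100ms blocks, so one request costs 5 × $0.0000002083 = $0.0000010415. The result is $1 / $0.0000010415, which is approximately 960,000 requests, or roughly 1 million requests for $1.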
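If you want to play with the numbers yourself, the same arithmetic can be sketched in a few lines. It assumes the 100ms price quoted above, rounds the duration up to whole 100ms blocks, and counts compute time only, just like the example. The function names are made up for this post.

```python
import math

PRICE_PER_100MS_128MB = 0.0000002083  # USD, the figure quoted above


def cost_per_request(duration_ms, price_per_block=PRICE_PER_100MS_128MB):
    """Cost of one invocation, billed in 100 ms blocks rounded up."""
    blocks = math.ceil(duration_ms / 100)
    return blocks * price_per_block


def requests_per_dollar(duration_ms):
    """How many invocations of the given duration fit into $1."""
    return int(1 / cost_per_request(duration_ms))
```

Calling `requests_per_dollar(500)` gives a little over 960,000, which is where the "roughly 1 million requests for $1" figure comes from.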

Other Serverless services usually have a pricing policy based on “pay-per-request”.

In case of file storages, such as Amazon S3, an additional amount is charged for used storage space.

Naysayers claim that Serverless is overpriced, and that as soon as the app grows, the cost begins to increase as well. However, if you take the time to analyze this claim and do the math, you will find out that when using an alternative such as Kubernetes, you are not only going to be paying for the infrastructure where your clusters are running, but you will also have to pay people to manage these as well. In the end, you will come to the conclusion that this solution only pays off in case of really large traffic. There is also another reason to consider Serverless. Today, it is much easier to raise more money from investors than to find new employees on the exhausted job market.

Comparison

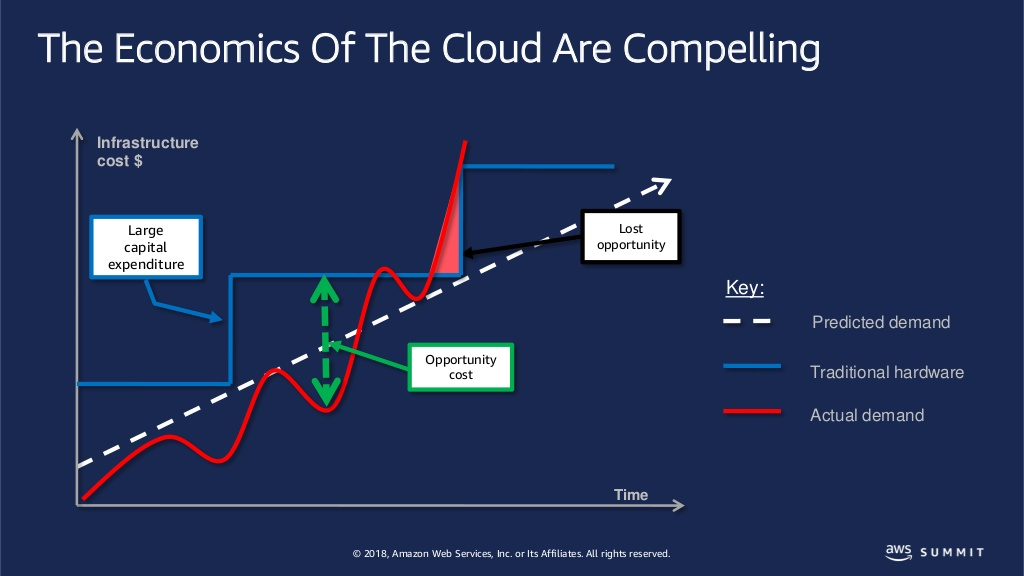

The smallest scalable unit in a classic cloud environment is a “single instance.” Infrastructure costs therefore go up or down in steps of a few dollars, for at least several minutes at a time.

See the blue line in the graph below.

In the Serverless ecosystem, the smallest scalable unit is an “execution of a Lambda function.” In this case, infrastructure costs go up or down in steps of as little as $0.0000002083, for as little as 100ms at a time.

See the red line in the graph below.

Such flexibility gives Serverless its primary feature – immediate scaling to zero and back. This means that if nobody is using your app (or a part of it) in a given moment, your expenses are, at that moment, zero.
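Here is a rough sketch of what scaling to zero means for the bill. It compares an always-on instance with a Lambda function over a few hours of uneven traffic. The Lambda price is the figure used earlier in this post, while the hourly instance price is a made-up value for illustration only, so treat the output as a shape rather than a quote.

```python
import math

LAMBDA_PRICE_PER_100MS = 0.0000002083  # USD for 128 MB, figure from the text
INSTANCE_PRICE_PER_HOUR = 0.01  # USD, hypothetical value for illustration only


def hourly_costs(requests_per_hour, duration_ms=500):
    """Returns (requests, lambda_cost, instance_cost) for each hour."""
    blocks = math.ceil(duration_ms / 100)
    rows = []
    for requests in requests_per_hour:
        # Lambda cost follows the traffic; the instance costs the same
        # whether it serves any requests or not.
        lambda_cost = requests * blocks * LAMBDA_PRICE_PER_100MS
        rows.append((requests, lambda_cost, INSTANCE_PRICE_PER_HOUR))
    return rows


if __name__ == "__main__":
    for requests, lam, inst in hourly_costs([0, 0, 1000, 50000, 0]):
        print(f"{requests:>6} req  lambda=${lam:.6f}  instance=${inst:.6f}")
```

In the hours with no traffic the Lambda column is exactly zero, while the instance keeps costing the same amount.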

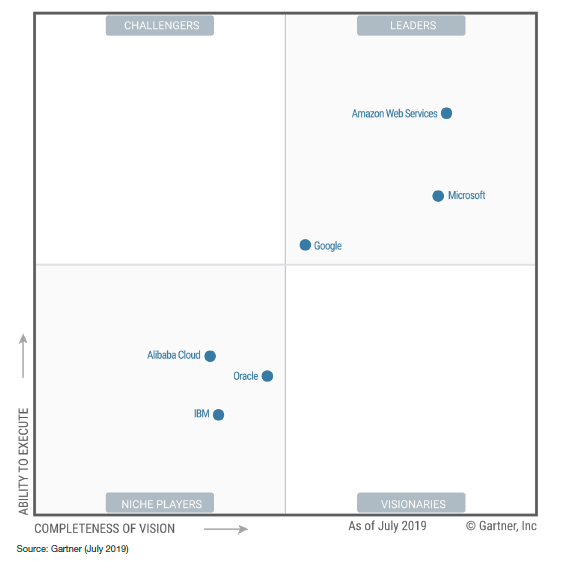

Market Overview

At the beginning, AWS was the only player on the market of Serverless technologies. Soon, Google joined with their Google Cloud Functions, as well as Microsoft with their Microsoft Azure Functions.

AWS is generally regarded as the market leader, especially when it comes to Serverless ecosystems. It is vital to explore different options and providers depending on your needs before you start with development. Focus on supported languages and runtime versions. It is highly likely though that you will find your way to AWS anyway.

Vendor lock-in

One of the worries surrounding cloud solutions is “vendor lock-in.” It is a fear that a company will become technologically dependent on one single cloud provider and a migration to another one (e.g. for financial reasons) would be out of reach, or even impossible, as it would require costly refactoring of infrastructure and applications.

A migration of basic resources, such as virtual instances, would only mean infrastructural changes. However, in case of a Serverless app it would most likely mean refactoring a part of the app, which makes the migration virtually impossible.

The result is that in case of Serverless apps you have to make peace with the fact that you will indeed have a strong vendor lock-in. Despite this, I still think that it's a good value for the price.

Frameworks

Even though you can manually set up whatever you like in cloud web consoles, it’s not the right approach when it comes to production applications. For this reason, there are frameworks which take care of the entire lifecycle of the application, from packaging, through deployment, all the way to removal. These frameworks are mainly Command Line Interface (CLI) tools.

Most popular frameworks

Serverless Framework is the most popular community framework, which was created sometime after AWS Lambda was made accessible to the public at the beginning of 2015. This framework supports AWS, Google Cloud and Microsoft Azure, and has an ample plugin ecosystem, which allows developers to tailor it to their needs. This is the reason why we have decided to use it at Purple Technology as well.

AWS SAM (Serverless Application Model) is a framework which was created by AWS in the fall of 2016. It might be suitable for beginners, but I would personally not recommend using it for larger production apps, as its adoption rate is minimal and, unlike Serverless Framework, it does not offer extension via plugins.

Technically, it is more or less a mutation of CloudFormation templates with its own CLI for deploying and debugging apps.

AWS Amplify is a much more comprehensive framework than the two aforementioned ones, as those only cover the backend part of an app. Amplify contains a spectrum of JavaScript frontend libraries that manage things like communication with REST/GraphQL API, authentication of users and so on. It really comes as a full package for developers to make use of.

Summary

We at Purple Technology are great enthusiasts of Serverless technologies, and I am not afraid to say we are pioneers to some extent as well.

In case you have any questions, feel free to contact me on Twitter @FilipPyrek.

With ❤️ made in Brno.